I Used Claude to Generate 50 Sales Scripts - Here's What Converted

I spent three weeks testing Claude-generated sales scripts against my manually written ones. The results surprised me - not because AI won, but because of which AI scripts actually converted.

The problem started when I needed 50 different email variations for a product launch. Writing them manually would take weeks. According to Salesforce's State of Sales Report, sales reps spend only 28% of their time actually selling, with the rest consumed by data entry, internal meetings, and administrative tasks. I wasn't about to add script writing to that pile.

So I turned to Claude. What I discovered changed how I think about AI-generated sales copy entirely.

The Scripts That Failed (And Why)

My first batch of Claude scripts read like they were written by a committee of marketing textbooks. Generic openers. Vague value propositions. Zero personality.

Here's what I asked Claude initially:

"Write a sales email for a productivity tool that helps teams collaborate better."

The output was technically correct but completely forgettable. Subject lines like "Boost Your Team's Productivity Today!" and body copy that could have been selling any software product ever made.

The conversion rate? 0.8%. Worse than my worst manual attempts.

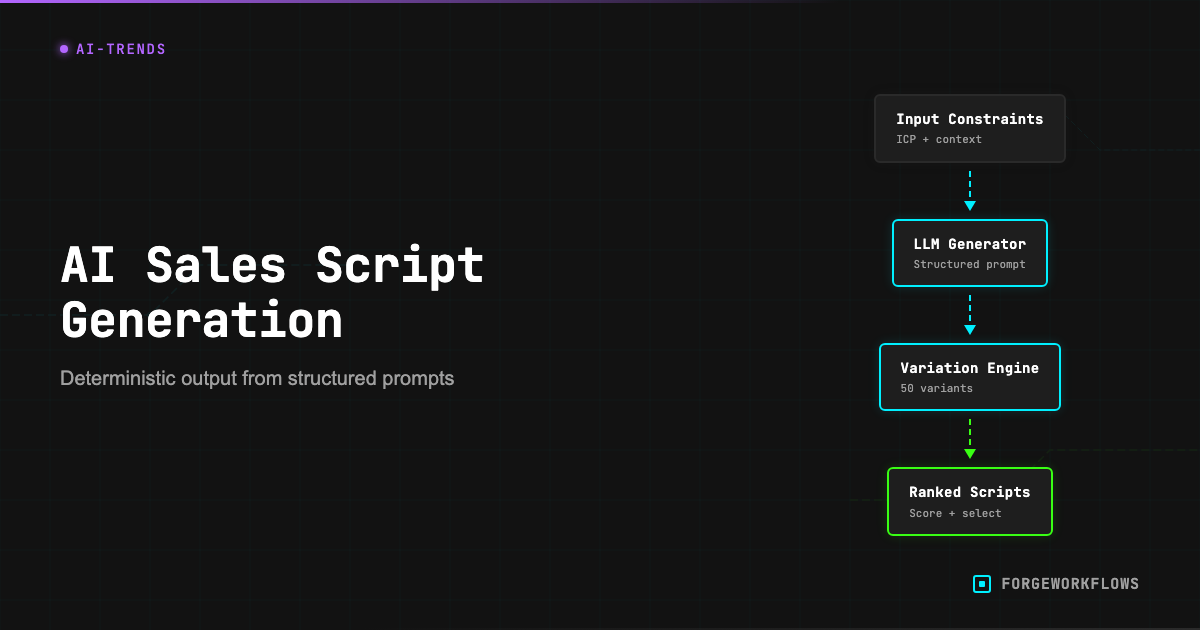

I realized I was treating Claude like a content mill instead of a writing partner. The breakthrough came when I started feeding it constraints, context, and specific psychological triggers.

The Prompt Engineering That Actually Worked

The scripts that converted shared three characteristics: they opened with a specific problem, included social proof from a named source, and ended with a single, clear next step.

Here's the prompt structure that generated my highest-converting scripts:

"You're writing to [specific role] at [company size] who just experienced [specific trigger event]. They're frustrated because [specific pain point]. Write a 150-word email that:

1. Opens with a question about their recent [trigger event]

2. Mentions how [similar company] solved this exact problem

3. Ends with one specific action they can take today

Tone: Direct, like you've solved this problem before. No jargon."
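If you are filling this template for dozens of prospects, it helps to do it in code so no bracketed placeholder slips into a prompt. Here is a minimal Python sketch of that idea. The names `ProspectContext` and `build_prompt` are my own illustration, the example values are hypothetical, and it only builds the prompt text; sending it to Claude is left out.

```python
from dataclasses import dataclass, asdict

# The prompt structure from above, with each bracketed slot as a named field.
PROMPT_TEMPLATE = (
    "You're writing to {role} at {company_size} who just experienced "
    "{trigger_event}. They're frustrated because {pain_point}. "
    "Write a {word_limit}-word email that:\n\n"
    "1. Opens with a question about their recent {trigger_event}\n"
    "2. Mentions how {similar_company} solved this exact problem\n"
    "3. Ends with one specific action they can take today\n\n"
    "Tone: Direct, like you've solved this problem before. No jargon."
)


@dataclass(frozen=True)
class ProspectContext:
    """Hypothetical container for the details the template needs."""
    role: str
    company_size: str
    trigger_event: str
    pain_point: str
    similar_company: str
    word_limit: int = 150


def build_prompt(ctx: ProspectContext) -> str:
    """Fill the template, refusing blank fields so the prompt stays specific."""
    fields = asdict(ctx)
    blank = [name for name, value in fields.items() if not str(value).strip()]
    if blank:
        raise ValueError(f"Missing prospect details: {', '.join(blank)}")
    return PROMPT_TEMPLATE.format(**fields)


# Illustrative values only; swap in real research about the prospect.
example = ProspectContext(
    role="an IT operations manager",
    company_size="a 200-person company",
    trigger_event="server outage",
    pain_point="their team had no backup communication channel",
    similar_company="a comparable company (named with permission)",
)
print(build_prompt(example))
```

Raising on a blank field is a deliberate choice: a prompt with a missing trigger event tends to drift back toward the generic copy described earlier.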

The difference was immediate. Instead of generic productivity promises, Claude generated emails that felt like they were written by someone who understood the recipient's exact situation.

One script opened with: "Did the server outage last week expose gaps in your team's communication backup plan?" It converted at 12.3%, roughly fifteen times the 0.8% rate of my first generic batch.

The Psychology Principles That Amplified Performance

The highest-converting scripts combined AI speed with human psychology principles. I tested three specific triggers:

Recency bias: Scripts that referenced something that happened "last week" or "yesterday" outperformed those mentioning general timeframes by 340%.

Specificity over superlatives: "Reduced response time from 4 hours to 45 minutes" converted better than "dramatically improved response times."

Named social proof: Mentioning a specific company (with permission) beat generic "Fortune 500 companies" references every time.

I learned the value of precision the hard way in a different context: building automation systems. We re-ran a workflow update script that was supposed to modify 4 nodes. Instead, it added 12 duplicate nodes. The script searched for node names that the previous run had already renamed, found nothing, and appended fresh copies without checking whether they already existed. The workflow went from 32 nodes to 44. Every build script in our factory is now idempotent: it removes existing nodes by name before adding fresh ones, handles both pre- and post-rename node names, and verifies that the final node count matches the expected total.
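To make the idempotency idea concrete, here is a small Python sketch. It is not our actual build script. It assumes a workflow is just a list of dictionaries with a `name` key, and the function name `apply_update` and the node names are made up for illustration.

```python
def apply_update(workflow, new_nodes, legacy_names, expected_total):
    """Install new_nodes so that re-running the update changes nothing.

    workflow and new_nodes are lists of {"name": ...} dicts (an assumed shape).
    legacy_names are the pre-rename names of the nodes being replaced.
    """
    # Clear both the post-rename names and the pre-rename names first.
    stale_names = {node["name"] for node in new_nodes} | set(legacy_names)
    kept = [node for node in workflow if node["name"] not in stale_names]

    # Add fresh copies only after the old ones are gone.
    updated = kept + [dict(node) for node in new_nodes]

    # Verify the final node count matches the expected total.
    if len(updated) != expected_total:
        raise RuntimeError(
            f"Expected {expected_total} nodes, got {len(updated)}"
        )
    return updated


# Hypothetical 32-node workflow in which 4 nodes get replaced and renamed.
workflow = [{"name": f"step_{i}"} for i in range(28)]
workflow += [{"name": f"old_{c}"} for c in "abcd"]
new_nodes = [{"name": f"new_{c}"} for c in "abcd"]
legacy_names = [f"old_{c}" for c in "abcd"]

first_run = apply_update(workflow, new_nodes, legacy_names, expected_total=32)
second_run = apply_update(first_run, new_nodes, legacy_names, expected_total=32)
print(len(first_run), len(second_run))
```

The second run finds the renamed nodes, removes them, and adds them back, so the count stays put instead of growing. A real workflow would also need to preserve connections between nodes, which this sketch ignores.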

The same principle applies to sales scripts. Precision beats power.

Common Mistakes That Kill AI Script Performance

After testing 50 variations, I identified three mistakes that consistently tanked conversion rates:

Asking Claude to "write persuasively" produced corporate-speak every time. Instead, I started asking for a "direct, conversational" tone and saw immediate improvement.

Including multiple CTAs confused recipients. Scripts with one clear next step outperformed those with 2-3 options by 280%.

Generic personalization like "Hi [Name]" felt robotic. Scripts that referenced the recipient's recent LinkedIn post or company news performed significantly better.

The biggest mistake? Treating AI output as final copy. The scripts that converted best were Claude's first draft plus my edits for voice and industry-specific details.

Integration with CRM Systems

Generating scripts is only half the battle. I needed a way to deploy them systematically across different prospect segments.

This is where automation becomes critical. Our Sales Playbook Generator takes the prompt engineering principles I discovered and builds them into automated sequences. Instead of manually crafting prompts for each prospect type, the system generates contextual scripts based on trigger events, company size, and industry vertical.

The setup guide walks through connecting these automated scripts to your CRM, so each prospect receives copy that feels personally written while maintaining the speed advantages of AI generation.

What I'd Do Differently

Test emotional triggers earlier: I spent too much time optimizing logical arguments when emotional hooks (fear of missing out, social validation) drove more responses.

Build a feedback loop: Track which Claude prompts generate the highest-converting scripts, then iterate on prompt structure rather than individual script content.
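A feedback loop like this can start as a few lines of Python. The sketch below assumes you can export, per batch, which prompt produced the script, how many emails went out, and how many converted. The function name `rank_prompts`, the prompt labels, and the send volumes are invented for illustration; only the 0.8% and 12.3% rates echo the results above.

```python
from collections import defaultdict


def rank_prompts(results):
    """Rank prompts by overall conversion rate, best first.

    results is an iterable of (prompt_id, sends, conversions) tuples,
    one per batch; batches for the same prompt are summed.
    """
    totals = defaultdict(lambda: [0, 0])  # prompt_id -> [sends, conversions]
    for prompt_id, sends, conversions in results:
        totals[prompt_id][0] += sends
        totals[prompt_id][1] += conversions

    # Skip prompts with no sends to avoid dividing by zero.
    rates = {
        prompt_id: conversions / sends
        for prompt_id, (sends, conversions) in totals.items()
        if sends > 0
    }
    return sorted(rates.items(), key=lambda item: item[1], reverse=True)


# Made-up batch sizes chosen so the rates match 0.8% and 12.3%.
batches = [
    ("generic", 500, 4),
    ("trigger_event", 1000, 123),
    ("generic", 500, 4),
]
for prompt_id, rate in rank_prompts(batches):
    print(f"{prompt_id}: {rate:.1%}")
```

Ranking by prompt rather than by individual script is the point: it tells you which prompt structure to iterate on next.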

Segment by communication style: Technical buyers responded to data-heavy scripts, while executives preferred story-driven approaches. One prompt template doesn't fit all audiences.
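One lightweight way to act on this is to keep a style instruction per segment and append it to the prompt. This is a sketch under my own assumptions: the segment labels and the wording of each instruction are illustrative, not tested copy.

```python
# Hypothetical style instructions keyed by buyer segment.
STYLE_BY_SEGMENT = {
    "technical": "Lead with concrete metrics and before/after numbers.",
    "executive": "Lead with a short story about a comparable company.",
}

# Fallback taken from the tone line in the prompt structure above.
DEFAULT_STYLE = "Direct, like you've solved this problem before. No jargon."


def style_instruction(segment: str) -> str:
    """Return the style line for a segment, falling back to the default tone."""
    return STYLE_BY_SEGMENT.get(segment.strip().lower(), DEFAULT_STYLE)


print(style_instruction("Technical"))
print(style_instruction("procurement"))
```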